Energy Efficiency | July 23, 2020

Beyond PUE: Data Center Cooling Efficiency

Today’s engineers design modern data centers with state-of-the-art cooling infrastructure, taking into account the vast amount of power needed to keep them cool. This usually means three things: (1) selecting the most efficient equipment, (2) implementing best practices for cooling system and data hall design, and (3) ensuring servers are cooled as efficiently as possible. This was not always the case.

While many companies are moving to the cloud, hundreds of legacy data center sites are still up and running, and many colocation and cloud providers still rely on data centers that are 10 to 15 or more years old. These are the sites whose cooling systems are struggling to keep up with increasing IT loads.

If I’ve seen anything in the hundreds of data centers I’ve audited, however, it’s that every site is different. Because cooling infrastructure efficiency varies so widely, the industry came up with PUE (Power Usage Effectiveness), a metric for benchmarking efficiency and for guiding optimization projects and control system integrations.

We will discuss the strengths of this metric and an alternative that may make more sense when looking at cooling efficiency in data centers.

Why we have PUE

The Green Grid published the PUE metric in 2007, and there’s a reason we’re still talking about it today, and still debating its limitations. PUE began as the best way to measure “infrastructure energy efficiency in data centers” (The Green Grid, p.3), so it pays to understand your options, and your opportunities, when working with real-time PUE data and moving beyond a single metric toward better operations.

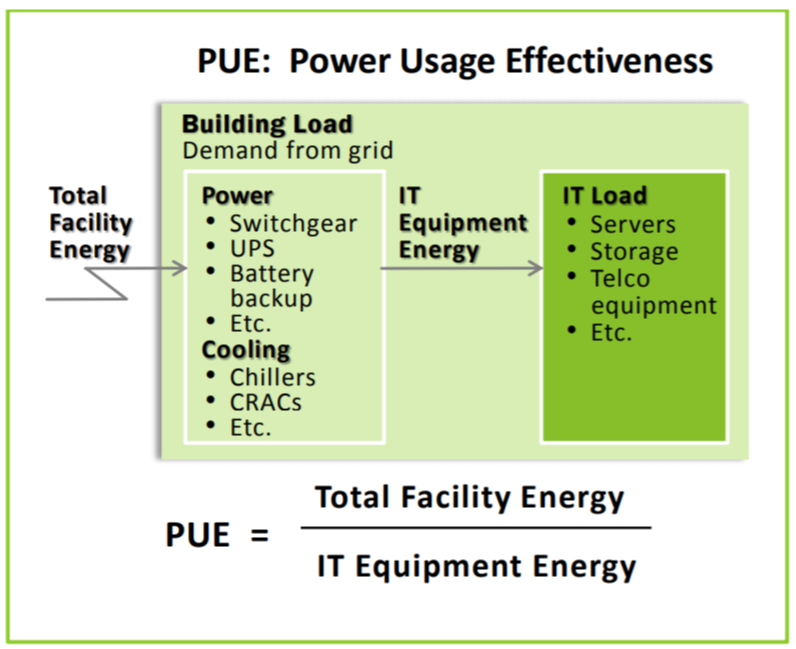

PUE is simply calculated by dividing total facility energy by IT equipment energy in a data center building.

The ideal PUE is 1.0, which would mean all of the energy entering the data center is used by IT equipment alone. This is a theoretical figure: some energy is always required to run the cooling systems and other supporting equipment in a facility. Still, the goal is to get as close to 1.0 as possible.

Most data center sites we visit (typically older facilities with some legacy equipment and configurations) hover between a PUE of 2.0 and 3.0, meaning the facility as a whole consumes two to three times the energy used by the IT equipment alone.

Calculating PUE in data centers

You can use your own figures to determine the efficiency of your facility’s infrastructure. Here’s the ratio:

PUE = Total Facility Energy / IT Equipment Energy

Photo source: The Green Grid
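As a quick illustration of the ratio, here is a minimal Python sketch. The function name `pue` and the meter readings are hypothetical, chosen only to land in the 2.0 to 3.0 range typical of older sites; it assumes both figures are energy over the same period, in the same units.

```python
def pue(total_facility_kwh: float, it_equipment_kwh: float) -> float:
    """Power Usage Effectiveness: total facility energy / IT equipment energy."""
    if it_equipment_kwh <= 0:
        raise ValueError("IT equipment energy must be positive")
    if total_facility_kwh < it_equipment_kwh:
        # Total facility energy includes the IT load, so it can never be smaller.
        raise ValueError("total facility energy cannot be less than IT energy")
    return total_facility_kwh / it_equipment_kwh


# Hypothetical annual meter readings for an older facility.
total_kwh = 8_760_000  # whole building, including IT
it_kwh = 3_504_000     # IT equipment only (e.g., measured at PDU outputs)

ratio = pue(total_kwh, it_kwh)
overhead_kwh = total_kwh - it_kwh
print(f"PUE = {ratio:.2f}")                       # PUE = 2.50
print(f"Non-IT overhead = {overhead_kwh:,} kWh")  # Non-IT overhead = 5,256,000 kWh
```

Subtracting IT energy from total facility energy also gives the non-IT overhead, which is the portion an efficiency project can actually reduce.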

PUE and its limitations

PUE was a good first approach to coming up with an overall metric for understanding efficiency in a data center. In 2013, a sustainability strategist for IO, writing for Data Center Knowledge, identified the two leading limitations of PUE:

- PUE cannot distinguish how well the systems are working, only how much power is being allocated to them; and
- PUE is unreliable when comparing data center sites, because it cannot account for variables such as size, location, data sets, design, etc.

He ultimately made the case for real-time PUE. Seven years later, we regularly integrate control systems in facilities so that data center managers can monitor, view and act on their own real-time PUE data.
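Real-time PUE dashboards usually work from interval meter data. The sketch below is a minimal illustration, assuming hypothetical 15-minute kWh readings for total facility and IT energy; the `Interval` type and both helper functions are illustrative, not taken from any particular control system.

```python
from dataclasses import dataclass
from typing import Iterable, List


@dataclass
class Interval:
    """Energy metered over one interval (hypothetically 15 minutes), in kWh."""
    total_facility_kwh: float
    it_equipment_kwh: float


def interval_pue(readings: Iterable[Interval]) -> List[float]:
    """PUE for each interval on its own, as a live dashboard might display it."""
    return [r.total_facility_kwh / r.it_equipment_kwh for r in readings]


def period_pue(readings: Iterable[Interval]) -> float:
    """PUE over the whole period: summed total energy / summed IT energy.

    Averaging the per-interval ratios instead would give a lightly loaded
    interval the same weight as a heavily loaded one.
    """
    readings = list(readings)
    total_kwh = sum(r.total_facility_kwh for r in readings)
    it_kwh = sum(r.it_equipment_kwh for r in readings)
    return total_kwh / it_kwh


# Two hypothetical intervals: one at high IT load, one at low IT load.
readings = [Interval(300.0, 200.0), Interval(150.0, 50.0)]
print(interval_pue(readings))          # [1.5, 3.0]
print(round(period_pue(readings), 2))  # 1.8
```

Summing energy before dividing keeps the period figure consistent with PUE’s definition as an energy ratio: the two interval values are 1.5 and 3.0, but the period PUE is 450 / 250 = 1.8, not their simple average of 2.25.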

For most facilities, however, the majority of their non-IT energy use comes from the HVAC systems. This means we approach PUE with one more important distinction from its original formula:

HVAC energy use relative to IT load is the most important metric to understand inside a data center.

Removing all of the other energy use captured in the “Total Facility Energy” term of the original PUE calculation gives a much clearer picture of where HVAC efficiency can be improved to achieve an optimal operating environment.
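The HVAC-versus-IT view fits neatly inside the original formula. If total facility energy is split into IT, HVAC, and everything else, then dividing through by IT energy gives PUE = 1 + HVAC/IT + other/IT, so the HVAC term can be tracked on its own. A minimal sketch, assuming sub-metered HVAC energy is available; the function name and figures are hypothetical:

```python
from typing import Dict


def pue_breakdown(it_kwh: float, hvac_kwh: float, other_kwh: float) -> Dict[str, float]:
    """Split PUE into its IT, HVAC, and other-overhead components.

    Assumes total facility energy = IT + HVAC + other, where "other" is a
    catch-all (lighting, UPS and distribution losses, etc.). Dividing through
    by IT energy gives PUE = 1 + HVAC/IT + other/IT.
    """
    if it_kwh <= 0:
        raise ValueError("IT energy must be positive")
    total_kwh = it_kwh + hvac_kwh + other_kwh
    return {
        "pue": total_kwh / it_kwh,
        "hvac_to_it": hvac_kwh / it_kwh,  # HVAC energy relative to IT load
        "other_to_it": other_kwh / it_kwh,
    }


# Hypothetical annual sub-metered totals, in kWh.
b = pue_breakdown(it_kwh=1_000_000, hvac_kwh=1_200_000, other_kwh=300_000)
print(f"PUE {b['pue']:.2f} = 1 + HVAC {b['hvac_to_it']:.2f} + other {b['other_to_it']:.2f}")
```

With these figures, HVAC accounts for 1.2 of the 1.5 points of overhead above the ideal 1.0, which is the kind of concentration that makes the HVAC ratio worth tracking separately.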

Beyond airflow management: how to further lower PUE

Airflow management best practices have long been touted for lowering energy use by cooling a server room more efficiently. But physical best practices (containment, grommets, perforated tiles, blanking panels, etc.) still require strategy and controls to be effective and to meaningfully impact PUE.

Taking the delta between the energy used by the HVAC systems (chillers, condenser-based systems) and your IT load (converted into the tons of cooling it requires) reveals your inefficiency. Continuing to drill down on the efficiency of the base HVAC systems themselves (more efficient motors, replacing end-of-life units with more efficient ones, etc.) can improve efficiency beyond what airflow management techniques achieve.
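One way to put numbers on that delta is the common kW-per-ton measure of cooling efficiency. The sketch below assumes essentially all IT power ends up as heat the HVAC plant must remove (it ignores lighting, envelope and electrical-loss loads, so it somewhat understates the true cooling load) and uses 1 ton of refrigeration = 12,000 BTU/h, about 3.517 kW. The 1.0 kW/ton target is a hypothetical benchmark for illustration only; substitute a figure appropriate to your plant. All function names are illustrative.

```python
KW_PER_TON = 3.517  # 1 ton of refrigeration = 12,000 BTU/h, about 3.517 kW of heat


def cooling_tons_required(it_load_kw: float) -> float:
    """Cooling load in tons, assuming essentially all IT power becomes heat."""
    return it_load_kw / KW_PER_TON


def hvac_kw_per_ton(hvac_kw: float, it_load_kw: float) -> float:
    """HVAC electrical input per ton of IT heat removed (lower is better)."""
    return hvac_kw / cooling_tons_required(it_load_kw)


def excess_hvac_kw(hvac_kw: float, it_load_kw: float, target_kw_per_ton: float) -> float:
    """HVAC power drawn beyond what a plant running at target_kw_per_ton would need."""
    return hvac_kw - target_kw_per_ton * cooling_tons_required(it_load_kw)


# Hypothetical snapshot: 703.4 kW of IT load (about 200 tons) cooled by 300 kW of HVAC.
it_kw, hvac_kw = 703.4, 300.0
print(f"{cooling_tons_required(it_kw):.0f} tons, {hvac_kw_per_ton(hvac_kw, it_kw):.2f} kW/ton")
# Against the hypothetical 1.0 kW/ton target, the gap is roughly 100 kW.
print(f"excess: {excess_hvac_kw(hvac_kw, it_kw, target_kw_per_ton=1.0):.0f} kW")
```

A lower kW/ton means less HVAC power per unit of heat removed; the excess figure is the kind of gap that motor upgrades and equipment replacements aim to close.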

Today’s benefits of an HVAC-based PUE strategy

The Green Grid’s 2012 analysis of PUE acknowledges that “Monitoring various components of the mechanical and electrical distribution will provide further insight as to the large energy consumers and where possible efficiency gains can be made (e.g., chillers, pumps, towers, PDUs, switchgear, etc.)” (p. 15). With the right strategy in place, more efficient HVAC means more cooling capacity for increased IT loads.

Freeing up capacity allows our multi-tenant clients to host more customers in their existing white space, using the cooling infrastructure already built into the facility. Any data center will benefit from improved cooling efficiency through the resulting energy savings, lower OpEx and better facility operations. Calculating HVAC energy use relative to IT load can be one of the most useful ways to optimize data center facilities.
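The capacity argument can also be sketched with simple arithmetic. Assuming, hypothetically, that a facility is limited by a fixed power envelope and that its overhead ratios stay constant as IT load grows (real plants only approximate this), the largest IT load that fits is the envelope divided by 1 + HVAC/IT + other/IT. The function name and numbers below are illustrative:

```python
def max_it_load_kw(facility_capacity_kw: float, hvac_to_it: float, other_to_it: float) -> float:
    """Largest IT load that fits within a fixed facility power envelope.

    Total = IT * (1 + hvac_to_it + other_to_it), solved for IT.
    Assumes the overhead ratios do not change as IT load grows.
    """
    return facility_capacity_kw / (1.0 + hvac_to_it + other_to_it)


# Hypothetical 2,000 kW envelope; only the HVAC ratio improves.
capacity_kw = 2_000.0
before_kw = max_it_load_kw(capacity_kw, hvac_to_it=0.8, other_to_it=0.2)  # PUE 2.0
after_kw = max_it_load_kw(capacity_kw, hvac_to_it=0.4, other_to_it=0.2)   # PUE 1.6
print(f"IT load supported: {before_kw:.0f} kW -> {after_kw:.0f} kW")
```

Under these assumptions, cutting the HVAC ratio in half lets the same envelope support 250 kW more IT load, which is the headroom that translates into room for more tenants.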

Discover more content and insights from Mantis Innovation